A four-way intersection in a growing city can look orderly from a distance. Lanes are marked, signals change on schedule, and cameras hang from poles recording a constant stream of vehicles and pedestrians. The stress appears in the details: a wrong-way turn on a ramp, a bus braking hard to avoid a distracted pedestrian, or a near-miss between a motorcycle and a truck that never shows up in official statistics.

Many transportation agencies collect video and sensor data at these locations, but their systems often operate as passive recorders. Footage may be reviewed after an incident, and some rule-based tools monitor for simple violations, yet much of the risk in everyday traffic remains unmeasured and unmanaged.

The gap between what infrastructure sees and what it understands has become a central challenge for cities trying to improve safety and keep up with rising demand on roads and transit networks.

Skylark Labs, a New York-based artificial intelligence (AI) company founded in 2021, is one of a group of firms working to close that gap by shifting traffic intelligence to the edge of the network. Its transportation platform uses adaptive AI models that run directly on roadside devices, fleet units, and transit infrastructure, turning each node from a passive sensor into an active decision point.

The approach is being applied to intelligent transportation systems that span roadways, public transit, and fleet operations. It is built on the premise that traffic environments change too fast for static, centrally trained systems to keep up.

The New Face Of AI With Skylark Labs

Many people hear the term AI and think first of automation: faster queues at toll booths, streamlined ticketing, or more modern digital processes in transport systems. That view is not wrong, but it is narrow. Automation focuses on efficiency and throughput, on moving more vehicles and people with less manual work.

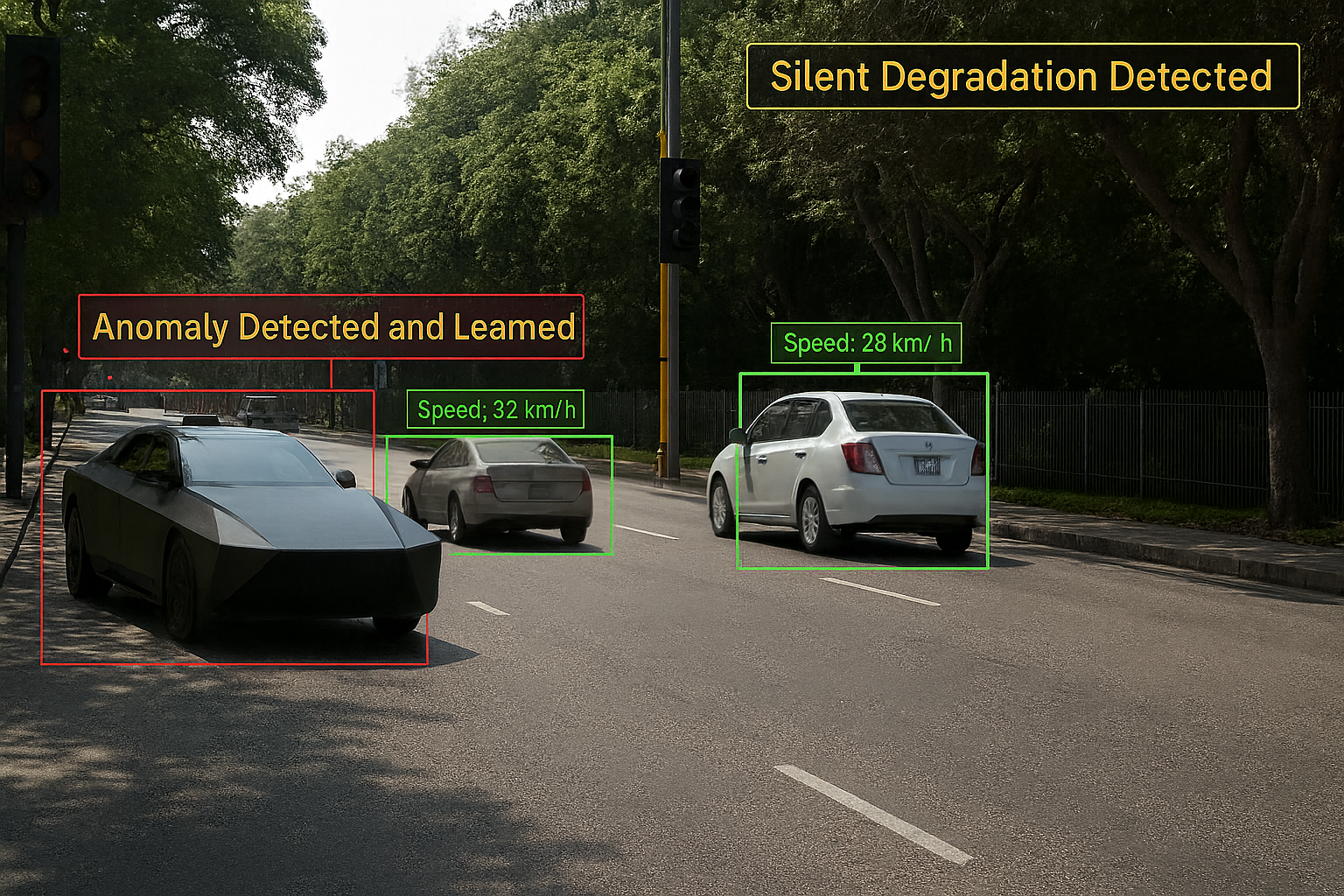

For Skylark Labs, AI has a broader role than automating transactions or streamlining control-room tasks. Its systems are designed to watch how traffic actually behaves on the road, tracking wrong-way vehicles, harsh braking, lane drifting, near-miss events, and risky interactions between cars and pedestrians.

That information feeds into continuous monitoring, where AI models running on devices at intersections, along highways, and inside vehicles help surface safety issues that might not appear in traditional crash reports. The emphasis shifts from moving people faster to understanding where and why they may be at risk.

In Skylark Labs’ deployments, AI is applied to tasks that directly affect safety, monitoring, and accident prevention, not only to back-office optimization. Models running on edge devices continuously monitor for wrong-way vehicles, hazardous maneuvers, near-miss events, and patterns that indicate fatigue or dangerous driving, even when connectivity is limited.

The result is an AI layer that helps agencies see emerging problems, intervene earlier, and design safer infrastructure over time. Skylark Labs’ founder, Dr. Amarjot Singh, believes that with this approach, AI does not just make systems faster; it broadens what transportation networks can perceive and how they protect the people using them.

AI That Adapts To Change

AI has been layered onto transportation infrastructure, but often in a way that reproduces the existing dependence on centralized systems. Many AI deployments in transportation are trained in a lab, installed in the field, and left to run until performance drops. Updating them means collecting new data, retraining models centrally, and redeploying software across the network. That cycle can be slow and expensive, and it does not always fit the constraints of agencies working with tight budgets and limited technical staff.

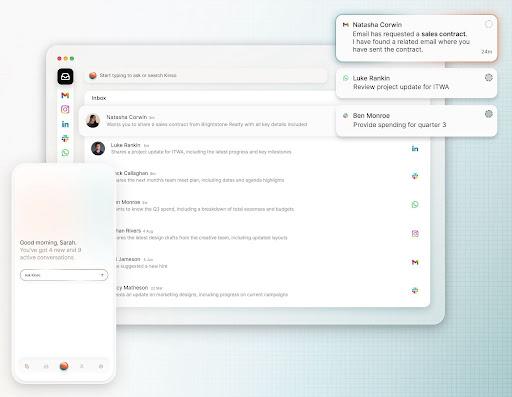

Skylark Labs’ transportation offering is structured to address these limitations. Instead of treating cameras and roadside units as feeders to a central system, it places adaptive AI models on edge hardware deployed at intersections, along highways, and inside vehicles.

These models analyze live video and sensor feeds to detect violations, hazards, and risk behaviors, while a monitoring layer checks for unfamiliar scenarios and triggers local updates when needed.
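To make the idea of a monitoring layer concrete, here is a minimal sketch in Python. It is not Skylark Labs’ implementation, which the company has not published. It assumes, purely for illustration, that each detection comes with a confidence score and that a sustained drop in recent scores is a reasonable proxy for an unfamiliar scenario. The `DriftMonitor` class, its parameters, and the threshold are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


class DriftMonitor:
    """Hypothetical monitor: flags when recent confidence scores fall
    well below a baseline collected while the model was performing well."""

    def __init__(self, baseline, window=5, z_threshold=3.0):
        self.base_mean = mean(baseline)
        # Guard against a zero spread in the baseline sample.
        self.base_std = pstdev(baseline) or 1e-6
        self.recent = deque(maxlen=window)
        self.z_threshold = z_threshold  # assumed value, tune per site

    def observe(self, score):
        """Record one score; return True if the window looks unfamiliar."""
        self.recent.append(score)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough recent data to judge yet
        # z-score of the window mean against the baseline distribution
        std_err = self.base_std / (len(self.recent) ** 0.5)
        z = (self.base_mean - mean(self.recent)) / std_err
        return z > self.z_threshold


monitor = DriftMonitor([0.90, 0.92, 0.88, 0.91, 0.89], window=3)
for score in [0.90, 0.91, 0.89, 0.50]:
    if monitor.observe(score):
        print("unfamiliar scenario: schedule a local model update")
```

In a real deployment, the flag would feed whatever local update mechanism the device supports. The sketch covers only the detection half of that loop.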

The platform is built around an edge-native design, where models run directly on devices installed in the field rather than in distant data centers. Skylark Labs’ transportation stack operates on hardware such as the Synapse AI Box, Sentinel AI Camera, and Scout AI Tower, which sit alongside existing infrastructure at intersections, along highways, or on fleet vehicles. By placing computation close to where data is generated, the system can interpret conditions as they unfold, turning cameras and sensors from passive recorders into active decision points.

Because the AI is adaptive, the models do not remain fixed once deployed. They learn continuously from live traffic flows, driver behavior, and changing roadway scenarios instead of waiting for scheduled, centralized retraining cycles. When new vehicle types appear, road layouts change, or behavior patterns shift, the models adjust their internal parameters in the field. This allows the system to maintain useful performance over time, even as conditions diverge from those seen during initial training.

To support this, Skylark Labs’ adaptive AI ingests and analyzes inputs from multiple sources, including CCTV cameras, vehicle sensors, and radar or LiDAR feeds. It processes these streams to detect wrong-way vehicles, pedestrian hazards, near-miss incidents, and behaviors associated with accident risk in real time. The focus is not limited to clear violations; it extends to early indicators of stress on the network, such as repeated harsh braking or risky interactions at specific points in the system.
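One way to picture an early indicator such as repeated harsh braking is the sketch below. It is an illustration under stated assumptions, not the company’s method. It assumes tracked vehicles yield timestamped speed samples, and it treats a deceleration of 3.5 m/s² or more as harsh. The threshold, the sample format, and the function names are all hypothetical.

```python
from collections import Counter

HARSH_DECEL_MS2 = 3.5  # assumed threshold, not a Skylark Labs value


def harsh_braking_counts(samples, threshold=HARSH_DECEL_MS2):
    """Count harsh-braking events per location.

    samples: iterable of (vehicle_id, location_id, time_s, speed_ms),
    ordered by time within each vehicle.
    """
    last_seen = {}  # vehicle_id -> (time_s, speed_ms)
    counts = Counter()
    for vehicle_id, location_id, time_s, speed_ms in samples:
        if vehicle_id in last_seen:
            prev_time, prev_speed = last_seen[vehicle_id]
            dt = time_s - prev_time
            if dt > 0:
                decel = (prev_speed - speed_ms) / dt  # m/s^2, positive when slowing
                if decel >= threshold:
                    counts[location_id] += 1
        last_seen[vehicle_id] = (time_s, speed_ms)
    return counts


def hotspots(counts, min_events=2):
    """Locations where harsh braking repeats at least min_events times."""
    return sorted(loc for loc, n in counts.items() if n >= min_events)


samples = [
    ("bus-1", "5th-and-main", 0.0, 15.0),
    ("bus-1", "5th-and-main", 1.0, 10.0),   # 5.0 m/s^2: harsh
    ("car-7", "5th-and-main", 0.0, 14.0),
    ("car-7", "5th-and-main", 1.0, 9.0),    # 5.0 m/s^2: harsh
    ("car-9", "ramp-12", 0.0, 12.0),
    ("car-9", "ramp-12", 1.0, 11.0),        # 1.0 m/s^2: normal
]
print(hotspots(harsh_braking_counts(samples)))
```

Counting by location rather than by vehicle reflects the article’s point: the signal of interest is stress at a specific place in the network, not a single driver’s behavior.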

According to Dr. Singh, a key element of the design is its minimal dependence on remote cloud resources. Because most processing and adaptation occur on the edge devices themselves, the system remains operational during network outages or periods of degraded connectivity.

Only selected events and summaries need to be transmitted, which reduces bandwidth demands and ongoing operational costs. Keeping analysis on-site also limits how much sensitive video and sensor data leaves the local environment, giving agencies more control over storage, access, and compliance with data protection requirements.
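The bandwidth argument can be shown with a small sketch. It assumes, hypothetically, that each on-device detection is a record with a `kind` and a `severity` score between 0 and 1. Only high-severity events are sent in full, and everything else is reduced to counts. The field names and the 0.8 cutoff are illustrative assumptions, not details from Skylark Labs.

```python
import json
from collections import Counter


def build_uplink_payload(detections, min_severity=0.8):
    """Keep high-severity events in full; reduce the rest to counts."""
    events = [d for d in detections if d["severity"] >= min_severity]
    summary = Counter(d["kind"] for d in detections)
    return {"events": events, "summary": dict(summary)}


# Fifty routine detections and one serious event, all produced on the device.
detections = [
    {"kind": "harsh_braking", "severity": 0.3, "t": i} for i in range(50)
]
detections.append({"kind": "wrong_way", "severity": 0.95, "t": 50})

payload = build_uplink_payload(detections)
raw_bytes = len(json.dumps(detections))
sent_bytes = len(json.dumps(payload))
print(f"raw: {raw_bytes} bytes, transmitted: {sent_bytes} bytes")
```

The raw detections, and the video behind them, stay on the device. Only the wrong-way event and a two-entry summary leave the site.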

What Adaptive AI Signals For The Future Of Mobility

The use of adaptive, edge-based AI in transportation carries implications beyond the technical architecture. For agencies, it offers a way to upgrade existing infrastructure rather than replace it by integrating devices into current intelligent transportation systems and camera networks. This can reduce capital expenditure and allow incremental deployment, starting with high-priority corridors or nodes.

Operationally, the approach aims to shift emphasis from recording what went wrong to detecting where things are likely to go wrong. For transportation systems, this means monitoring remains useful as roads, fleets, and rider patterns evolve, helping agencies move from static, after-the-fact analysis toward continuous, real-time risk management, without depending on constant connectivity or frequent large-scale retraining cycles.

For Dr. Singh and the Skylark Labs team, transportation is a natural proving ground for the company’s adaptive AI technology. The environments are dynamic, the stakes are clear, and the infrastructure is already partially instrumented.

Their idea is straightforward: a transportation system becomes smarter not only by adding more sensors, but also by enabling infrastructure to interpret what it sees and learn from it over time. Skylark Labs’ adaptive AI at the edge is one way of moving in that direction, turning intersections, vehicles, and corridors into active participants in how cities understand and manage mobility, rather than silent observers of whatever traffic happens to pass by.